Many quants around the world are trying to develop equity market neutral strategies because the premise behind them sounds appealing. However, these strategies have generated dismal returns in the last 11 years, even when the 2008 bear market is included. In this article we offer a brief account of the reasons for this underperformance.

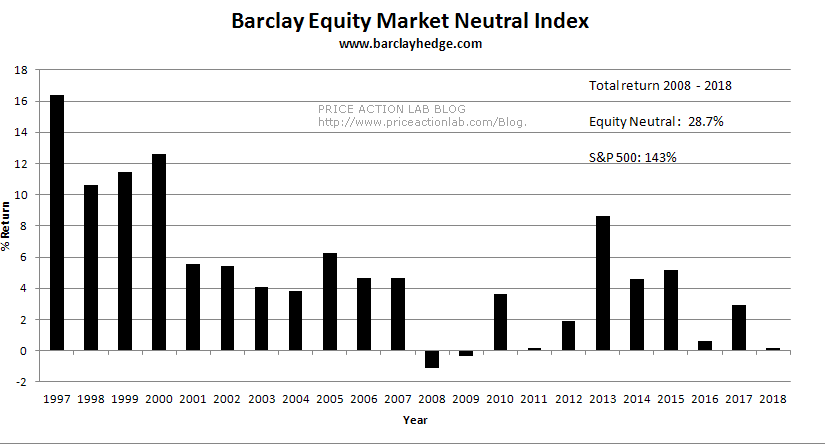

Results of equity neutral funds have been dismal in the last 11 years according to data collected by Barclay Hedge on more than 100 funds.

Although on average these funds navigated the financial crisis bear market with a small loss on the order of 1%, their total return since 2008 has been about 29% versus 143% for the S&P 500, as shown in the above chart. For investors who believe in the longer-term uptrend of equity markets, these funds offer no advantage and are a drag on profitability. For more risk-averse investors, equity market neutral funds can provide some hedge during times of turmoil at the expense of longer-term alpha.

The limiting case of an equity market neutral strategy is a pairs trade with a long and a short leg. This is a risky bet because even though the strategy is long and short equal dollar amounts, both positions can lose money simultaneously: the security in the long leg may fall in price while the one in the short leg rises. This strategy has large exposure to unsystematic risk, that is, risk specific to a company or industry. Imagine being short Walmart on Thursday, August 16, 2018, when the stock rose 9.3% on a report of its fastest sales growth in the USA. The key to limiting the impact of these tail events is diversifying away unsystematic risk.
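The arithmetic of a pair in which both legs lose can be sketched in a few lines. The function name `pair_pnl`, the notional, and the 2% fall in the long leg are hypothetical values chosen for illustration; only the 9.3% move comes from the Walmart example above.

```python
def pair_pnl(notional, long_return, short_return):
    """P&L of a dollar-neutral pair: long `notional` in one stock, short `notional` in another."""
    long_pnl = notional * long_return
    # A short position loses money when the shorted stock rises.
    short_pnl = -notional * short_return
    return long_pnl + short_pnl


# Hypothetical scenario: the long leg falls 2% while the short leg jumps 9.3%.
pnl = pair_pnl(100_000, long_return=-0.02, short_return=0.093)
print(f"Pair P&L: {pnl:,.0f}")
```

Being dollar neutral does not bound the loss: the two legs add up instead of offsetting each other.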

But diversify by how much? Go long 100 stocks and short 50 stocks with equal dollar amounts, or long 500 stocks and short 100 stocks, again with equal dollar amounts? Or hold the same number of long and short stocks with equal position size? This essentially becomes an optimization problem and opens the door to data-mining bias. There are a few parameters to determine:

- Number of long stocks

- Number of short stocks

- Position size

- Determining ranking factors for long and short

- Look-back period for factors to determine long and short

- Any other parameters included in the model

This is essentially a highly non-linear optimization problem, and in the minds of some quants it is suitable for applying supervised machine learning algorithms. But optimization is the basis of these algorithms: they minimize or maximize some objective function. While this seems to work well in applications where the features are spatially separated (for example machine vision), with temporal separation this turns out to be a very difficult problem to solve.
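To make the parameters listed above concrete, here is a minimal sketch of how a ranking factor could be turned into dollar-neutral weights. It assumes equal position sizes within each side and half of gross exposure per side; the function name `dollar_neutral_weights`, the tickers, and the scores are all hypothetical.

```python
def dollar_neutral_weights(scores, n_long, n_short):
    """Long the n_long highest-scoring names, short the n_short lowest, equal dollars per side."""
    if n_long <= 0 or n_short <= 0:
        raise ValueError("n_long and n_short must be positive")
    if n_long + n_short > len(scores):
        raise ValueError("n_long + n_short exceeds the universe size")
    ranked = sorted(scores, key=scores.get, reverse=True)
    weights = {ticker: 0.0 for ticker in scores}
    for ticker in ranked[:n_long]:
        weights[ticker] = 0.5 / n_long    # long side sums to +0.5
    for ticker in ranked[-n_short:]:
        weights[ticker] = -0.5 / n_short  # short side sums to -0.5
    return weights


# Hypothetical factor scores for a six-stock universe.
scores = {"A": 0.9, "B": 0.5, "C": 0.1, "D": -0.2, "E": -0.7, "F": -1.1}
for ticker, weight in dollar_neutral_weights(scores, n_long=2, n_short=3).items():
    print(f"{ticker}: {weight:+.3f}")
```

Every argument of this function, plus the choice of factor and look-back period behind `scores`, is a knob that can be tuned on historical data, which is where the data-mining bias enters.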

Here is the approach of a well-known quant fund: they eliminate variables 1 and 2 above (the number of long and short stocks), and potentially number 3, by considering a very large universe of more than 1000 stocks. Such an approach is obviously suited to large funds and rules out retail traders. But it has a drawback.

Briefly, as the number of securities increases, the standard deviation of returns decreases roughly with the square root of that number (assuming the positions are largely uncorrelated), but returns also decrease in an inverse relationship. Is it possible to establish a minimum value of the return so that the strategy is economically viable? The answer is also complicated and depends on:

- Transaction cost and other frictions

- Quality of features (alpha factors) used for ranking

- Predatory algo behavior

- Market conditions (volatility, trend, etc.)

If we assume that transaction cost is kept low, we are left with the other three important problems to solve.
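The diversification side of the trade-off mentioned earlier can be checked with a small simulation. This is a sketch under strong assumptions: positions are equally weighted, uncorrelated, and normally distributed with the same hypothetical per-position volatility `sigma`. It illustrates only the fall in the standard deviation of returns (the square root of variance), not the accompanying decay in returns.

```python
import math
import random
import statistics


def simulated_portfolio_vol(n_positions, sigma=0.02, n_periods=2000, seed=0):
    """Standard deviation of an equal-weight portfolio of uncorrelated positions."""
    rng = random.Random(seed)
    portfolio_returns = []
    for _ in range(n_periods):
        position_returns = [rng.gauss(0.0, sigma) for _ in range(n_positions)]
        portfolio_returns.append(sum(position_returns) / n_positions)
    return statistics.pstdev(portfolio_returns)


for n in (1, 25, 100):
    simulated = simulated_portfolio_vol(n)
    theoretical = 0.02 / math.sqrt(n)
    print(f"N={n:>3}  simulated vol={simulated:.4f}  sigma/sqrt(N)={theoretical:.4f}")
```

Real positions are correlated, so the actual reduction in volatility is smaller than this idealized case suggests.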

Quality of features

Many quant long/short strategies are over-fitted and, due to data-snooping (repeated use of data in the development phase), they show good statistical significance. Note that over-fitted strategies are not necessarily bad, as many, especially in academia, think; they may work well until market conditions change. If that takes a few years, then these strategies can generate large returns. CTA performance is a good example of this: the large returns of the 80s and 90s generated by strategies over-fitted on historical data (mostly break-out signals) evaporated in subsequent years as market conditions changed, and the result has been dismal performance. The decrease in the influx of dumb money (mostly chart traders) amplified the problem.
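The way data-snooping manufactures statistical significance can be illustrated with a short simulation. It is a sketch, not a test procedure: each "strategy" is pure noise with zero expected return, and the number of strategies, the sample length, and the daily volatility are hypothetical choices.

```python
import math
import random
import statistics


def t_stat(returns):
    """t-statistic of the mean return against zero."""
    standard_error = statistics.stdev(returns) / math.sqrt(len(returns))
    return statistics.mean(returns) / standard_error


def best_of_random_strategies(n_strategies=200, n_days=252, seed=1):
    """Generate strategies with no edge and report the best t-statistic among them."""
    rng = random.Random(seed)
    t_stats = []
    for _ in range(n_strategies):
        daily_returns = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
        t_stats.append(t_stat(daily_returns))
    return max(t_stats)


best = best_of_random_strategies()
print(f"Best t-statistic among 200 strategies with zero true edge: {best:.2f}")
```

Selecting the best performer after many trials on the same data produces a t-statistic that looks significant even though no strategy has any edge.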

Identifying quality features with economic value requires a lot of hard work that few are willing or qualified to put in. Many quants think that machine learning magically solves the problem when in fact it is a GIGO process: garbage-in, garbage-out. Having features with economic value equates to a license to print money up to a certain point dictated by liquidity constraints. Most features identified by data-mining have negative economic value and those based on unique hypotheses (for example fundamental factors) suffer from small samples. This is a difficult problem. The way I have approached this problem is by identifying features based on anomalies in price action. Under certain conditions determined mainly by the integrity of the data-mining process, these features can provide economic value. Machine learning can be used as a meta-model to select the most suitable securities to trade long or short, conditioned on expected profitability. You do not want to use machine learning to determine expected profitability in the first place because in most cases it will be a spurious result. Here is an analogy:

If you would like to buy a good pair of shoes, you do not go to stores with cheap imports. You must first identify the universe of good brands and the stores that sell them. These brands have features with high value for the problem. After you identify these brands, let us say 1 through 10, you can use machine learning as a meta-model to classify them and select one or two with the highest probability to suit you. If you try to use machine learning to find shoes from all available brands, chances are you will end up with something mediocre in terms of quality but at an attractive price. But what you want is the best price from the good brands not from all brands.

Predatory algo behavior

The influx of dumb money into markets has decreased, so at this point predatory algos attempt to profit from funds, including quant funds. There may be strategies designed to neutralize equity market neutral strategies by randomly pumping or dumping stocks usually held in their portfolios. We have witnessed in the last few years tail events in the returns of large cap stocks and I will just mention again the 9.3% rise in WMT last week. While that might have hurt only those equity market neutral strategies that were short WMT, if these tail events are repeated with a large number of stocks, they may eventually hurt many strategies by inducing friction in returns. A few lucky ones will benefit but the returns of the majority of strategies will decrease.

There are also other ways that predatory algos can impact the profitability of equity market neutral strategies: if liquidity is low, for example, the predatory algos, usually HFT, can lift bids and induce large slippage in execution. Limiting the universe to very liquid stocks minimizes the friction effects at the expense of diversification but cannot avoid tail events in returns. One way or the other, predatory algos will get most quant strategies and profit from their losses.

Market conditions

Trading strategies fail when the market conditions that contribute to their profitability change. As it turns out, knowing market conditions and when they start to change is more important than any quantitative claims about the statistical significance of a strategy. In a paper I have provided the rationale and examples for this claim. If quants do not know the market conditions that drive the profitability of a strategy, then nasty surprises are possible. Market conditions have many faces although the primary two are momentum and mean-reversion.

In the last few years equity market volatility has remained overall at very low levels compared to the past. Equity market neutral strategies that benefited from that may face drawdowns when volatility increases. Testing the strategies in periods of higher volatility is required to assess their robustness.
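One simple way to run such a test is to split a strategy's returns by the volatility regime of the market. The sketch below is an assumption-laden illustration: `split_by_volatility`, the 20-day window, and the median threshold are hypothetical choices, and both return series are synthetic, built so that the strategy earns in calm markets and loses in turbulent ones.

```python
import random
import statistics


def split_by_volatility(strategy_returns, market_returns, window=20):
    """Group strategy returns by whether trailing market volatility is above its median."""
    trailing_vol = [
        statistics.pstdev(market_returns[i - window:i])
        for i in range(window, len(market_returns))
    ]
    # The threshold uses the full sample, so this is a diagnostic, not a trading rule.
    threshold = statistics.median(trailing_vol)
    low, high = [], []
    for vol, ret in zip(trailing_vol, strategy_returns[window:]):
        (high if vol > threshold else low).append(ret)
    return low, high


# Synthetic data: 250 calm days followed by 250 turbulent days.
rng = random.Random(42)
market = [rng.gauss(0.0, 0.005) for _ in range(250)] + [rng.gauss(0.0, 0.02) for _ in range(250)]
strategy = [0.001] * 250 + [-0.001] * 250  # hypothetical strategy returns

low_vol, high_vol = split_by_volatility(strategy, market)
print(f"Mean return, low-volatility regime:  {statistics.mean(low_vol):+.5f}")
print(f"Mean return, high-volatility regime: {statistics.mean(high_vol):+.5f}")
```

A strategy whose performance in the high-volatility group is much worse than in the low-volatility group has probably been benefiting from the calm regime rather than from a durable edge.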

Summary

Quant equity market neutral funds may serve as the next source of profitability for market makers and predatory algos after the decrease in the influx of human dumb money, primarily chart traders. Developing robust strategies depends on the quality and economic value of the features used to rank securities, and that is far from a trivial task. Outsourcing the task to a large number of competent programmers who lack market experience may lead to an increase in data-mining, and the strategies selected for trading may suffer from bias. At the same time, increased diversification diminishes return potential. Quants may need to avoid generic advice, especially coming from academia, and focus on sources of idiosyncratic alpha.

If you have any questions or comments, happy to connect on Twitter: @mikeharrisNY

Charting and backtesting program: Amibroker