Joe has thought of a way to combine technical analysis indicators, exit signals, and filters of all kinds, and to optimize performance by selecting the best parameters. He further reasoned that if this is done on in-sample historical data and the results are then validated out-of-sample, there is a good chance of finding something that can make him money. But unless Joe does a lot more than his program can do, he has no chance, because he is being fooled by randomness.

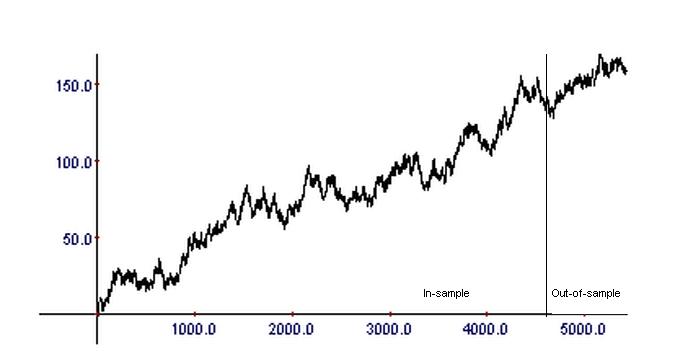

Joe tried to build a system for trading ES mini futures with a program he wrote around a very sophisticated algorithm that tests millions, billions, or even trillions of combinations of indicators, exits, filters, and formulas. The program generated 187 alternative systems that were profitable in the in-sample. One of those systems, number 98, was also highly profitable in the out-of-sample. Its equity curve looked similar to the one shown in the chart above.

Joe was really happy to find a system that was profitable in the in-sample and also tested profitable in the out-of-sample. But Joe was fooled by randomness through selection bias. The equity curve above was in fact generated by tossing a coin 5427 times, with a payout of +1 for heads and -1 for tails, and it took just 5 trials to obtain it.
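The coin-toss experiment is easy to reproduce. The following is a minimal sketch, not the code used for the chart: the function names `coin_toss_equity` and `best_of_trials` and the seed are assumptions made for illustration. It runs 5 trials of 5427 tosses each and keeps the curve with the highest final equity, which is exactly the kind of selection Joe's program performs.

```python
import random

def coin_toss_equity(n_tosses, rng):
    """Cumulative equity of n_tosses fair coin flips: +1 for heads, -1 for tails."""
    equity = 0
    curve = []
    for _ in range(n_tosses):
        equity += 1 if rng.random() < 0.5 else -1
        curve.append(equity)
    return curve

def best_of_trials(n_trials, n_tosses, seed=0):
    """Generate n_trials random curves and keep the one that ends highest."""
    rng = random.Random(seed)  # seed is arbitrary, chosen only for repeatability
    curves = [coin_toss_equity(n_tosses, rng) for _ in range(n_trials)]
    return max(curves, key=lambda curve: curve[-1])

best = best_of_trials(n_trials=5, n_tosses=5427)
print("final equity of the selected curve:", best[-1])
```

Changing the seed or raising `n_trials` should give a sense of how quickly an attractive curve turns up when only the best of several random runs is reported.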

Joe was fooled by randomness because he does not understand probability. If one tests millions of combinations of systems, the probability that several of them will pass out-of-sample validation by luck alone is very close to 1. The problem gets worse if the runs are repeated with added parameters, because data snooping then enters the process. Joe must do much more than out-of-sample validation to avoid being fooled by randomness. What exactly must be done is by no means fixed, because different people take different approaches to this problem. But those approaches, taken together, are what quant trading funds and sophisticated quant traders consider their edge.
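The "very close to 1" claim can be checked with a back-of-the-envelope calculation. The sketch below assumes, purely for illustration, that the candidate systems are independent and that each one has the same small chance `p_pass` of passing the out-of-sample test by luck. Neither assumption comes from Joe's program, and real candidates are usually correlated, but the qualitative conclusion is the same. Under those assumptions the chance that at least one system passes is 1 - (1 - p)^N.

```python
import math

def prob_at_least_one_pass(n_systems, p_pass):
    """P(at least one of n_systems passes by luck) = 1 - (1 - p_pass)**n_systems.

    Assumes independent systems with an identical pass probability
    (an illustrative simplification, not a property of any real search).
    """
    if not 0.0 <= p_pass <= 1.0:
        raise ValueError("p_pass must be between 0 and 1")
    if p_pass == 1.0:
        return 1.0
    # log1p/expm1 keep the result accurate when p_pass is tiny and n_systems is huge
    return -math.expm1(n_systems * math.log1p(-p_pass))

# Hypothetical numbers: 187 candidates with a 5% chance each,
# then one million candidates with a 0.001% chance each.
print(prob_at_least_one_pass(187, 0.05))
print(prob_at_least_one_pass(1_000_000, 1e-5))
```

Even with a very strict test, a large enough search should push this probability toward 1, which is why a single out-of-sample pass says little when the strategy was picked from many candidates.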

The validation schemes below have limited or no effectiveness when trading strategies are suggested by the data:

Out-of-sample tests: If these tests are used more than a few times with the same data, they cause data-snooping bias, i.e., the out-of-sample essentially becomes in-sample.

Monte-Carlo simulations: Most over-fitted strategies pass Monte-Carlo simulations because they are robust by design. These tests are good only for rejecting strategies, not for accepting them.

Tests on a portfolio of securities selected post-hoc: Some developers fool this validation test by selecting, after the fact, the portfolio that generates positive performance, which introduces bias. The portfolio must be standard and selected in advance.
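The effect of post-hoc portfolio selection can be illustrated with a small simulation. This is a sketch under stated assumptions: the "strategy" has zero edge (each trade is a fair coin toss paying +1 or -1), and the portfolio size, trade count, seed, and function names are all hypothetical. It compares the average result on a portfolio fixed in advance with the average over only those securities that happened to show a profit.

```python
import random
import statistics

def random_strategy_pnl(n_trades, rng):
    """Total P&L of a zero-edge strategy: each trade is +1 or -1 with equal odds."""
    return sum(1 if rng.random() < 0.5 else -1 for _ in range(n_trades))

def compare_portfolios(n_securities=30, n_trades=250, seed=1):
    """Return (mean P&L of the full portfolio, mean P&L of post-hoc winners, winner count)."""
    rng = random.Random(seed)  # arbitrary seed, for repeatability only
    pnl = [random_strategy_pnl(n_trades, rng) for _ in range(n_securities)]
    fixed_mean = statistics.mean(pnl)      # portfolio chosen in advance: every security
    winners = [x for x in pnl if x > 0]    # post-hoc choice: keep only what worked
    post_hoc_mean = statistics.mean(winners) if winners else 0.0
    return fixed_mean, post_hoc_mean, len(winners)

fixed_mean, post_hoc_mean, n_winners = compare_portfolios()
print("mean P&L, portfolio fixed in advance:", fixed_mean)
print("mean P&L, post-hoc winners only:", post_hoc_mean, "from", n_winners, "securities")
```

The post-hoc average is positive whenever at least one security shows a profit, even though the strategy has no edge at all, while the average over the pre-selected portfolio should hover around zero.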
