In this article we present a scheme for taking advantage of multiple CPU cores when running searches on long files with Price Action Lab. With this scheme the search time is reduced by a factor approximately equal to the number of running instances. Only a few extra steps are required to obtain the final results, and tools for performing them quickly and efficiently will be included in version 6.0 of the software.

The scheme requires starting and running as many instances of the program as there are available CPU cores. For example, if 4 CPU cores are available, the user can start 4 instances of the program. It also requires that each instance search a data file shortened from its end by a number of bars N equal to the Search Depth divided by the number of cores, times the instance index minus one. The formula is as follows:

Bars removed from the end of the file used by the $i$-th instance:

$$N_i = (i - 1)\,\frac{D}{n}, \qquad i = 1, 2, \ldots, n \qquad (1)$$

where $D$ is the desired Search Depth and $n$ is the number of instances, one per CPU core. The effective Search Depth of each instance, $S_d$, is given by

$$S_d = \frac{D}{n} \qquad (2)$$
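Equations (1) and (2) can be sketched in a few lines of Python, with D the desired Search Depth and n the number of instances. This is a minimal illustration, not part of Price Action Lab, and the function name `instance_plan` is hypothetical. When D is not divisible by n, the sketch assumes the effective depth is rounded down and every offset is a multiple of it, so the instances' search windows stay contiguous.

```python
def instance_plan(depth: int, n: int) -> tuple[int, list[int]]:
    """Return (effective Search Depth S_d, bars removed N_i for i = 1..n).

    Implements S_d = D / n (equation 2) and N_i = (i - 1) * D / n (equation 1).
    Assumption: integer division is used when D is not divisible by n.
    """
    if depth <= 0 or n <= 0:
        raise ValueError("depth and n must be positive")
    sd = depth // n  # equation (2), rounded down if needed
    removed = [(i - 1) * sd for i in range(1, n + 1)]  # equation (1)
    return sd, removed


if __name__ == "__main__":
    # SPY example from the text: D = 500 bars, 2 instances
    print(instance_plan(500, 2))  # (250, [0, 250])
    # The same depth split across 4 cores
    print(instance_plan(500, 4))  # (125, [0, 125, 250, 375])
```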

For example, in the case of SPY daily data and 2 instances running on two CPU cores, based on a desired Search Depth of 500 bars, equation (1) yields:

For core 1 and file 1:

N = (1 -1) * 500/2 = 0

For core 2 and file 2:

N = (2 -1) * 500/2 = 250

Thus, the file used by core 1 remains as is and the file used by core 2 is reduced by 250 bars. The effective Search Depth is given by equation (2) as:

Sd = 500/2 = 250 bars

To summarize, instead of running one search on the whole data file with a Search Depth of 500 bars, we can run two searches on two data files: the original file, used by the first instance, and the original file with its last 250 bars removed, used by the second instance. In both cases the Search Depth is set to 250 bars.
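To see why the two 250-bar searches cover the same bars as the single 500-bar search, the sketch below computes the window of bar indices each instance searches. It assumes, for illustration, that a Search Depth of S_d means the search covers the last S_d bars of the file it is given; the function name `search_windows` and the 5,000-bar file length are hypothetical.

```python
def search_windows(total_bars: int, depth: int, n: int) -> list[range]:
    """Bar-index window searched by each instance (0-based, end exclusive).

    Assumption: an instance whose file has its last N_i bars removed, run
    with Search Depth S_d, searches bars [L - N_i - S_d, L - N_i).
    """
    sd = depth // n
    windows = []
    for i in range(1, n + 1):
        end = total_bars - (i - 1) * sd  # one past the last bar of the reduced file
        windows.append(range(end - sd, end))
    return windows


if __name__ == "__main__":
    wins = search_windows(5000, 500, 2)
    print([(w.start, w.stop) for w in wins])  # [(4750, 5000), (4500, 4750)]
    covered = sorted(b for w in wins for b in w)
    # The union of the windows equals the single-instance window [4500, 5000)
    print(covered == list(range(5000 - 500, 5000)))  # True
```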

There is a caveat, however. Because Price Action Lab by design tests every pattern it finds over the whole data file used in the search, patterns found in a reduced file are evaluated only on that shorter file, and some of them might not satisfy the workspace criteria had the full file been used. This is handled by combining the results, testing the patterns on the whole data file, and removing those that do not fulfill the workspace criteria, which are the same for all instances. This extra step takes a few minutes, compared to the hours or even days saved by the multiple-instance scheme.

An example

The best way to illustrate the multiple instance scheme is by an example. We will first look at the single search results and the time they took and then implement and run the multiple instance scheme and compare the results. Hopefully, they will match.

Single instance search

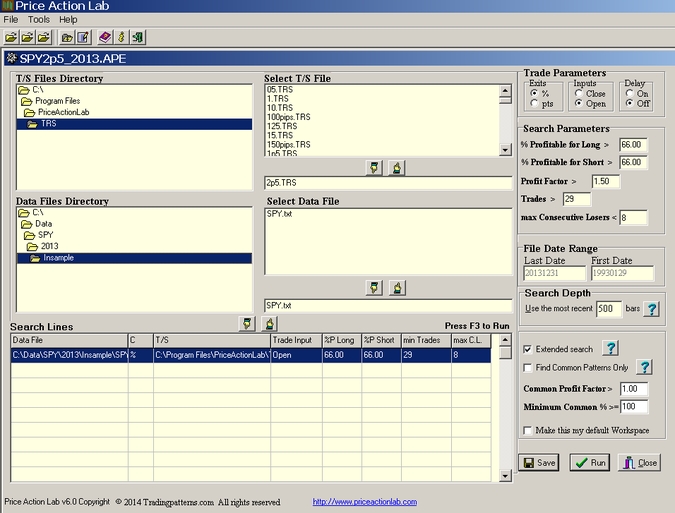

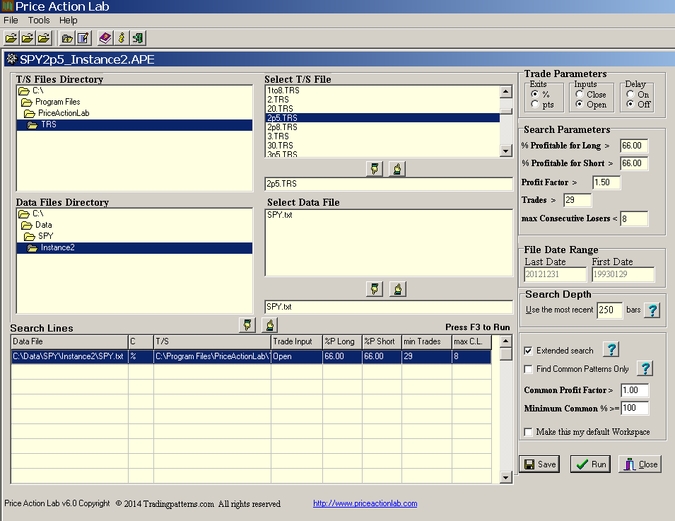

This will be a search for price patterns in SPY daily (unadjusted) data from inception to the end of 2013. The workspace with the parameters is shown below:

It can be seen that the search will be executed from 01/29/1993 to 12/31/2013 with a 2.5% profit target and stop-loss, and that we are looking for patterns with a win rate of 66% or higher, a profit factor greater than 1.5, at least 30 trades, and fewer than 8 consecutive losers. An extended search will be executed over a Search Depth of 500 bars.
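The workspace criteria can be expressed as a check over a pattern's trade results. The sketch below only illustrates how such statistics are commonly defined (win rate as the fraction of winning trades, profit factor as gross profit divided by gross loss); it is not Price Action Lab's internal code, and the names `pattern_stats` and `meets_criteria` are hypothetical.

```python
def pattern_stats(trades: list[float]) -> dict:
    """Summary statistics for a list of per-trade returns in percent."""
    wins = [t for t in trades if t > 0]
    losses = [t for t in trades if t < 0]
    gross_loss = -sum(losses)
    # Longest run of consecutive losing trades
    max_run = run = 0
    for t in trades:
        run = run + 1 if t < 0 else 0
        max_run = max(max_run, run)
    return {
        "trades": len(trades),
        "win_rate": len(wins) / len(trades) if trades else 0.0,
        "profit_factor": sum(wins) / gross_loss if gross_loss else float("inf"),
        "max_consec_losers": max_run,
    }


def meets_criteria(s: dict) -> bool:
    """Criteria from this example: P >= 66%, PF > 1.5, >= 30 trades, < 8 losers in a row."""
    return (
        s["win_rate"] >= 0.66
        and s["profit_factor"] > 1.5
        and s["trades"] >= 30
        and s["max_consec_losers"] < 8
    )


if __name__ == "__main__":
    # With a 2.5% target and stop, each trade is roughly +2.5 or -2.5
    stats = pattern_stats([2.5, 2.5, 2.5, -2.5] * 8)
    print(stats, meets_criteria(stats))
```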

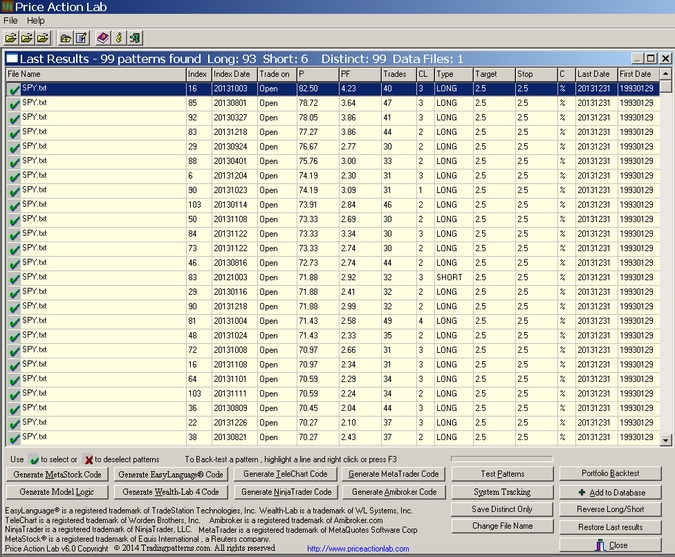

We had to run this on an older dual-core 2.40 GHz machine because the other machines were already executing very complex tasks with the program. It took 2 hours and 25 minutes for the program to generate the results, running on one CPU core at 100% utilization. Below are the results, sorted by highest win rate:

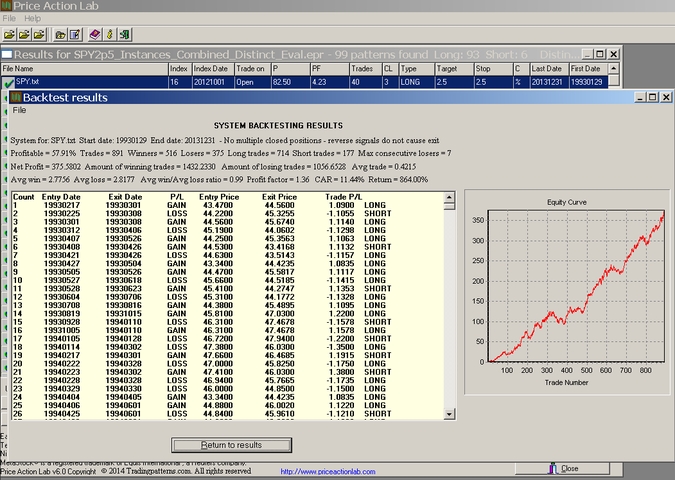

A total of 99 price patterns satisfied the search workspace criteria, 93 long and 6 short. Because of the upward bias of SPY, many more long patterns than short are usually found, 15.5 times more in this case (93/6), but the majority are 100% correlated, so the actual number of distinct long signals is much smaller. This can be seen after the group of 99 patterns is backtested using the Test Patterns tool without allowing multiple long or multiple short positions:

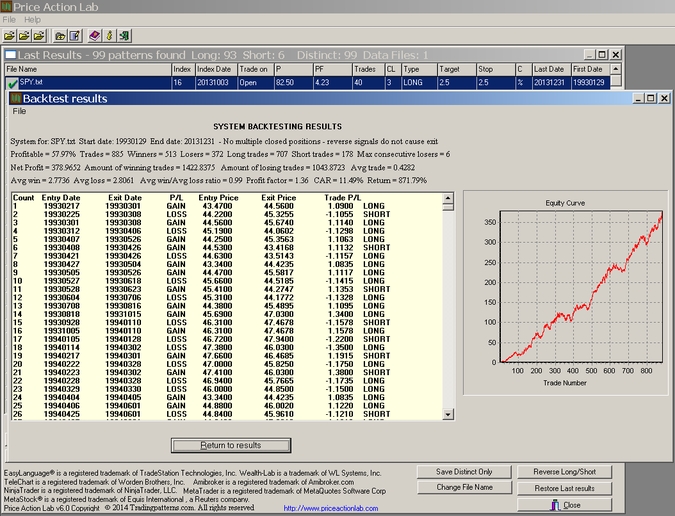

This test shows that the ratio of long to short trades drops to about 4 (707/178), which is reasonable given the upward bias. The CAR is 11.49% and the win rate is 57.97%. A total of 885 trades were placed and the profit factor is 1.36.
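Why do 93 long patterns produce only 707 long trades? When several correlated patterns signal on the same or overlapping bars and multiple positions are not allowed, only one position per side can be open at a time, so overlapping signals are skipped. The sketch below illustrates the idea with a deliberately simplified, hypothetical exit rule (a fixed holding period instead of the actual 2.5% target and stop); `count_trades` and `hold_bars` are hypothetical names, not options of the Test Patterns tool.

```python
def count_trades(entry_bars: list[int], hold_bars: int) -> int:
    """Count trades when only one position can be open at a time.

    entry_bars: bars on which any pattern signals an entry (duplicates allowed,
    since correlated patterns often fire on the same bar).
    hold_bars: simplified exit rule, the position closes hold_bars after entry,
    and a new entry is allowed on the exit bar.
    """
    trades = 0
    next_free = float("-inf")  # first bar at which a new entry is allowed
    for bar in sorted(entry_bars):
        if bar >= next_free:
            trades += 1
            next_free = bar + hold_bars
    return trades


if __name__ == "__main__":
    # Seven signals from several patterns, but only three non-overlapping trades
    print(count_trades([0, 0, 1, 5, 5, 6, 12], hold_bars=5))  # 3
```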

Two instance search

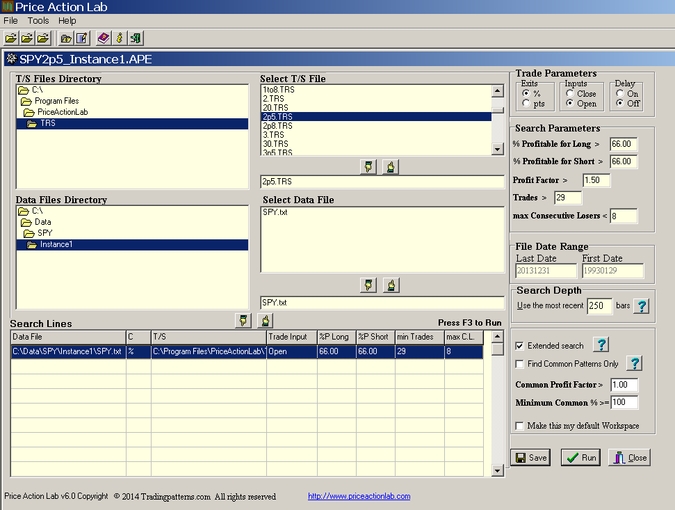

We will next run two instances of the program using two different data files. The first data file is identical to the one used in the single instance search, but the Search Depth is set to 250. The second file is reduced by 250 bars and its Search Depth is also set to 250. The workspace parameters otherwise stay the same. Since 250 bars is roughly one year of daily data, the second file ends at 12/31/2012.
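Preparing the second data file amounts to dropping its last N bars. The sketch assumes a hypothetical plain-text layout with an optional header line followed by one bar per line; adapt it to the actual format of your data files. Note the explicit handling of N = 0: in Python, `bars[:-0]` is an empty list, not the whole list, so the slice end is computed from the length instead.

```python
from pathlib import Path


def drop_last_bars(lines: list[str], n_bars: int, has_header: bool = True) -> list[str]:
    """Return the data lines with the last n_bars bars removed.

    Assumption: one bar per line, optionally preceded by a single header line.
    """
    if n_bars < 0:
        raise ValueError("n_bars must be non-negative")
    header, bars = (lines[:1], lines[1:]) if has_header else ([], lines)
    if n_bars > len(bars):
        raise ValueError("cannot remove more bars than the file contains")
    kept = bars[: len(bars) - n_bars]  # avoids the bars[:-0] pitfall
    return header + kept


def write_reduced_file(src: Path, dst: Path, n_bars: int) -> None:
    """Write a copy of src without its last n_bars bars (hypothetical file layout)."""
    lines = src.read_text().splitlines()
    dst.write_text("\n".join(drop_last_bars(lines, n_bars)) + "\n")
```

For the example in the text, the second instance's file would be produced with `n_bars=250`, with placeholder file names of your choosing.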

Workspace for the first instance

Workspace for the second instance

The two instances were started at practically the same time. The first produced results after 1 hour and 16 minutes and the second after 1 hour and 14 minutes. Thus, the time needed to execute both was cut nearly in half compared to the single instance, which took 2 hours and 25 minutes.
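The time saving can be quantified as a speedup, the single-instance time divided by the time of the slowest parallel instance (the combined results are available only when every instance has finished), and as a parallel efficiency, the speedup divided by the number of instances. The sketch below applies this to the timings reported above and, as a simplification, ignores the few minutes spent on the combining and retesting steps.

```python
def speedup(single_minutes: float, instance_minutes: list[float]) -> tuple[float, float]:
    """Return (speedup, efficiency) of a parallel run versus a single run."""
    wall = max(instance_minutes)  # the parallel run ends with its slowest instance
    s = single_minutes / wall
    return s, s / len(instance_minutes)


if __name__ == "__main__":
    # 2 h 25 min single run; 1 h 16 min and 1 h 14 min for the two instances
    s, eff = speedup(145, [76, 74])
    print(f"speedup {s:.2f}x, efficiency {eff:.0%}")  # speedup 1.91x, efficiency 95%
```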

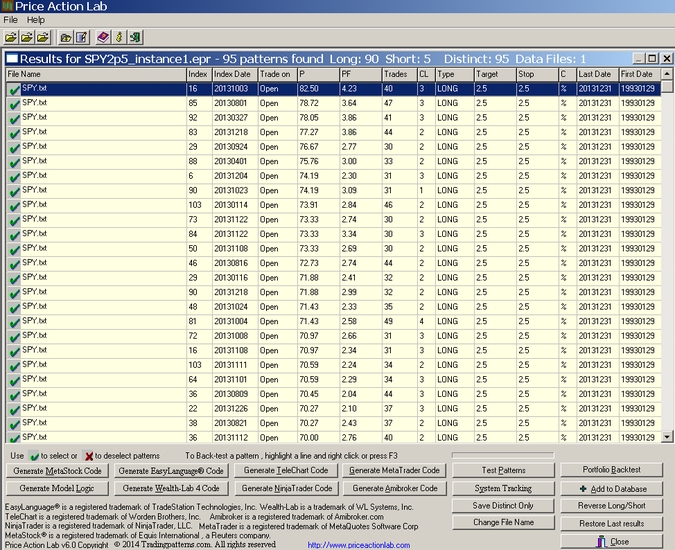

Results of the first instance

This instance found 95 patterns, 90 long and 5 short.

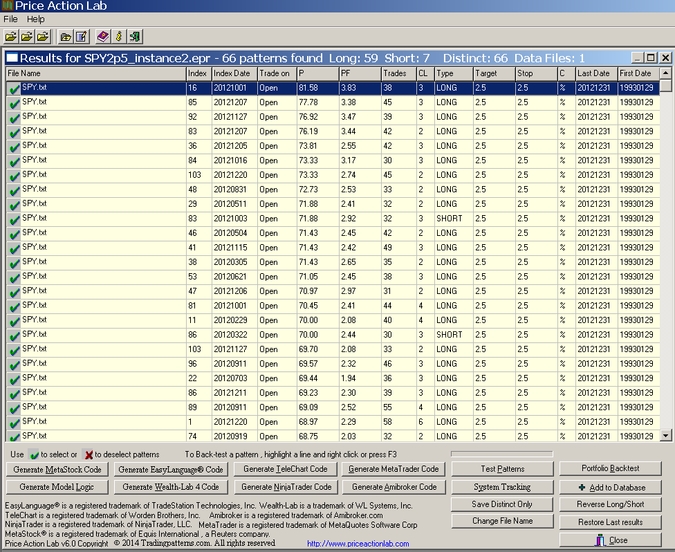

Results of the second instance

This instance found 66 patterns, 59 long and 7 short.

Combining the results of the two instances

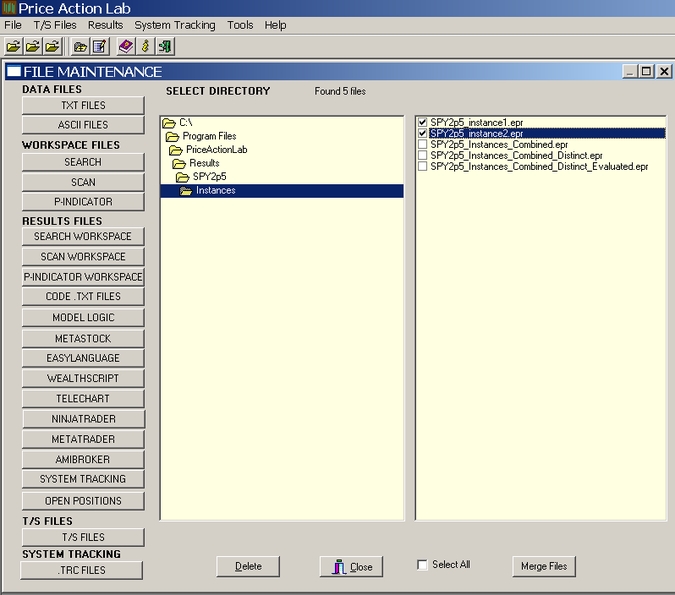

In v6.0 (to be released soon) there is a tool in File Maintenance for combining search workspace results. In previous versions this can be done with operating system commands.
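For versions before v6.0, the combining step can also be scripted. The sketch below simply concatenates results files; it assumes a hypothetical plain-text export with one pattern per line, which may not match the program's actual results format, and `combine_lines` and `combine_results` are illustrative names.

```python
from pathlib import Path


def combine_lines(*results: list[str]) -> list[str]:
    """Concatenate pattern lines from several result sets, skipping blank lines."""
    return [line for lines in results for line in lines if line.strip()]


def combine_results(sources: list[Path], target: Path) -> int:
    """Write the combined pattern lines to target and return how many were written.

    Assumption: each results file holds one pattern per line (hypothetical format).
    """
    combined = combine_lines(*(src.read_text().splitlines() for src in sources))
    target.write_text("\n".join(combined) + "\n")
    return len(combined)
```

With the two instances in this example, the combined file would hold 95 + 66 = 161 lines, duplicates included.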

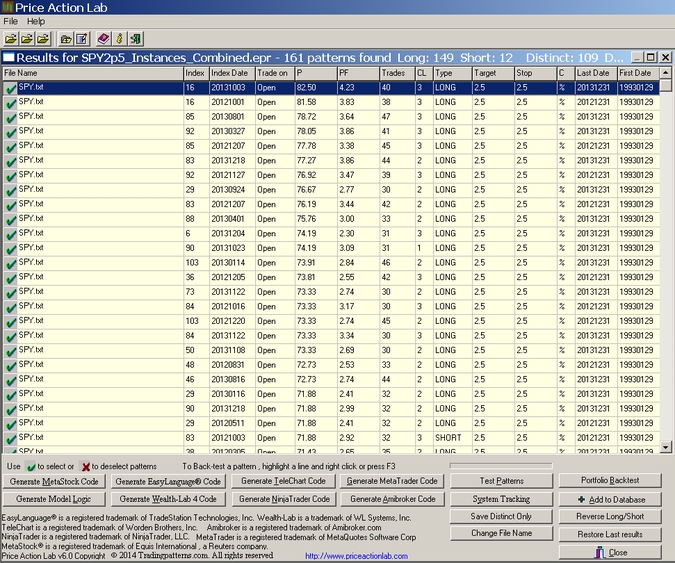

The combined results are saved under a new file name. When opened, the new file looks like the one below:

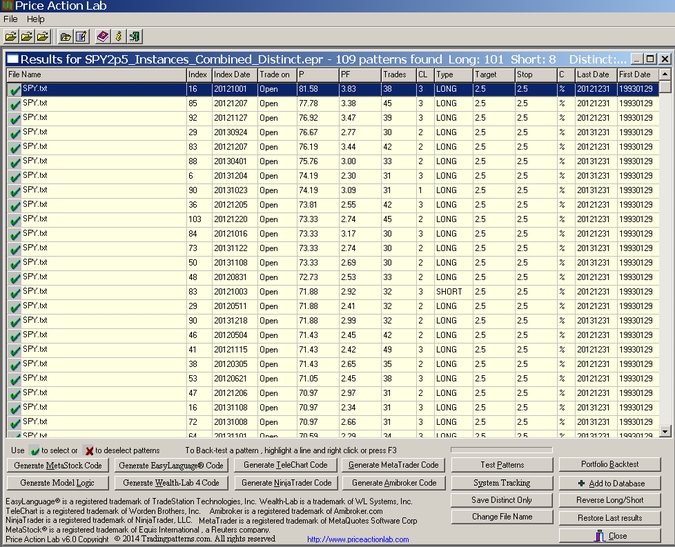

This new results file contains the results of both instances, i.e. 95 + 66 = 161 patterns. Many of these patterns are identical, so the next step is to keep only the distinct ones. This can be done quickly with a new tool in v6.0 (in previous versions it can be done manually). Clicking on Save Distinct saves a new file under a new name. The new file is shown below:
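Finding the distinct patterns is a de-duplication that keeps the first occurrence of each pattern. A sketch, assuming each pattern can be compared as a text line (a hypothetical representation, not the program's actual format; the pattern strings below are placeholders):

```python
def distinct_patterns(patterns: list[str]) -> list[str]:
    """Keep the first occurrence of each pattern, preserving order.

    Whitespace is normalized so that spacing differences alone do not make
    two otherwise identical patterns look distinct (an assumption).
    """
    seen: set[str] = set()
    unique: list[str] = []
    for p in patterns:
        key = " ".join(p.split())
        if key not in seen:
            seen.add(key)
            unique.append(p)
    return unique


if __name__ == "__main__":
    combined = ["LONG C[1] > O[2]", "LONG  C[1] > O[2]", "SHORT H[0] < L[3]"]
    print(distinct_patterns(combined))  # ['LONG C[1] > O[2]', 'SHORT H[0] < L[3]']
```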

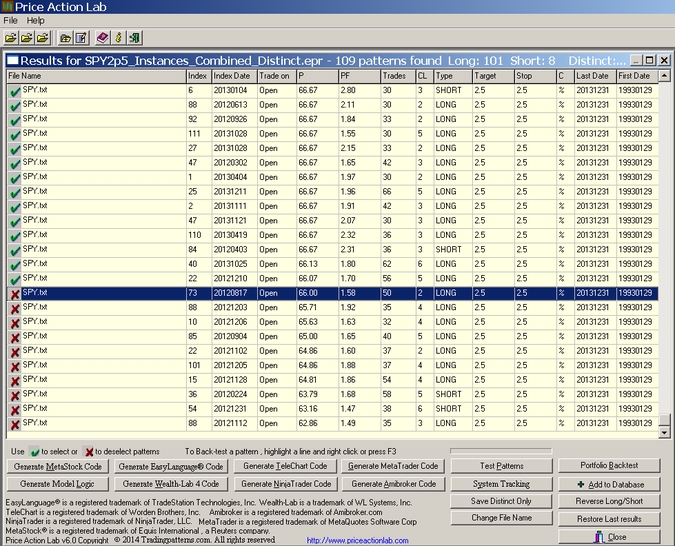

Next, as mentioned at the beginning of this article, we must remove the patterns generated by the second instance that do not perform well when tested on the whole sample. This is done with the Test Patterns tool (after closing the backtest results). The results are sorted by highest win rate P, and the patterns at the bottom with a win rate below 66% or a profit factor below 1.5 are unmarked:
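The retest-and-unmark step is a filter over each pattern's full-sample statistics. The sketch assumes those statistics have already been obtained from the Test Patterns tool and exported or typed in; the `FullSampleStats` record, its fields, and `split_valid` are hypothetical names, while the thresholds are this example's workspace criteria (the text unmarks on win rate and profit factor, and the sketch also checks the trade-count and consecutive-loser criteria).

```python
from dataclasses import dataclass


@dataclass
class FullSampleStats:
    name: str
    win_rate: float        # fraction of winning trades, e.g. 0.70 for 70%
    profit_factor: float
    trades: int
    max_consec_losers: int


def split_valid(stats: list[FullSampleStats]) -> tuple[list[FullSampleStats], list[FullSampleStats]]:
    """Split patterns into those meeting the workspace criteria on the whole
    sample and those to unmark."""
    def ok(s: FullSampleStats) -> bool:
        return (s.win_rate >= 0.66 and s.profit_factor > 1.5
                and s.trades >= 30 and s.max_consec_losers < 8)

    valid = [s for s in stats if ok(s)]
    failed = [s for s in stats if not ok(s)]
    return valid, failed
```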

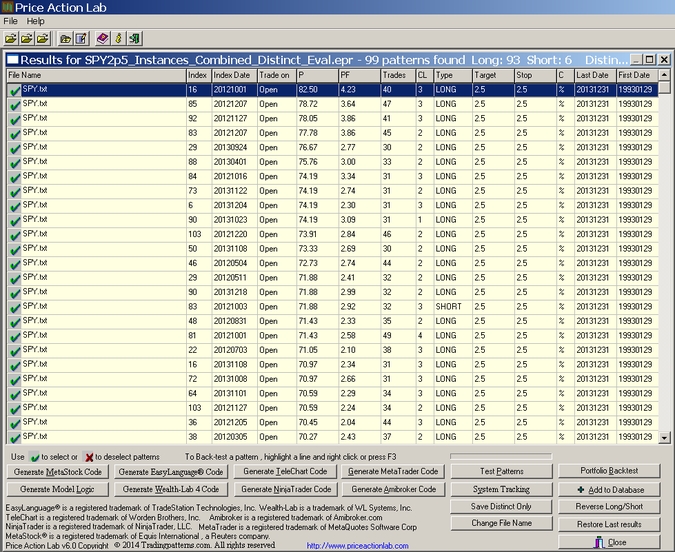

It can be seen that 10 patterns failed the test, 8 long and 2 short. This brings the number of remaining valid patterns down to 99, 93 long and 6 short, exactly the same counts obtained by the single instance for the whole sample with a 500-bar Search Depth. The valid patterns are then saved to a new file:

The final step is to backtest these patterns using the Test Patterns tool on the whole sample from 01/29/1993 to 12/31/2013 to see if the performance matches that of the single instance search when no multiple positions are allowed:

It can be seen that the CAR is 11.44%, only 5 basis points less than the original single instance result of 11.49%, and the profit factor is identical. There is a small variation in the number of trades, possibly because some patterns had to close their position in the reduced file before reaching a target or stop. The difference in trades is a mere 0.7%. The win rate of the combined result is 57.91%, very close to the original result of 57.97%.
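The comparison between the two backtests is simple arithmetic; a basis point is one hundredth of a percentage point. A quick check of the CAR and win-rate figures quoted for the two backtests:

```python
def diff_bp(a_pct: float, b_pct: float) -> int:
    """Difference between two percentages, in basis points (1 bp = 0.01%)."""
    return round((a_pct - b_pct) * 100)


if __name__ == "__main__":
    print(diff_bp(11.49, 11.44))  # CAR difference: 5
    print(diff_bp(57.97, 57.91))  # win-rate difference: 6
```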

Conclusion

The procedure described in this article can be used to take full advantage of multiple cores when using Price Action Lab. The extra steps take minimal effort and time compared to the savings in execution time. The example showed that the results obtained are, for all practical purposes, identical to those of a single-instance search, with the exception of a substantial reduction in the time taken to complete the search.

Acknowledgement

We would like to thank Steven, an open/lifetime license owner, for reminding us of this procedure and motivating us to write this post.